Building a Real-World Chatbot with Snowflake Intelligence: Here’s What We Learned

24.11.2025How We Turned Unstructured Hospitality Content into an Intelligent Employee Assistant.

Modern hospitality operations run on massive volumes of internal content—brand standards, SOPs, training docs, process handbooks, legal guidelines, and regional variations. A single employee question like “What are the check-in rules for our location in Asia-Pacific?” can require digging through 5+ document types across multiple systems. That was the core problem we set out to solve.

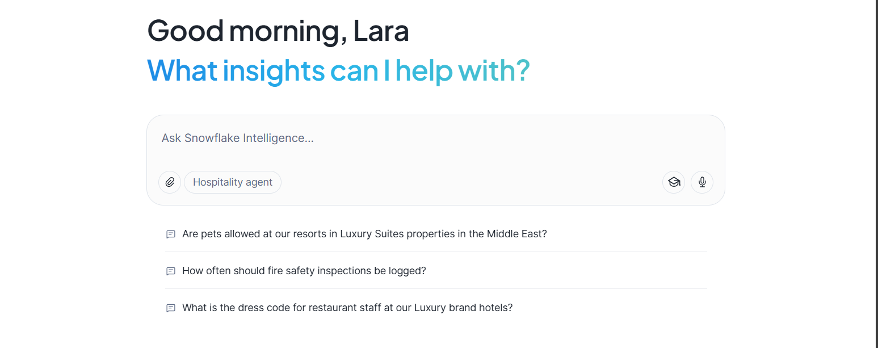

We built an internal chatbot powered entirely by Snowflake Cortex and Snowflake Intelligence, allowing employees to access accurate operational guidance instantly, across multiple global brands and regions.

The Challenge

The client operates hospitality properties worldwide, representing multiple sub-brands with differentiated standards. They needed:

- a single, searchable knowledge interface,

- built on top of their existing Snowflake environment,

- capable of understanding brand- and region-specific rules,

- and accurate enough to trust for daily operations.

Their documentation was spread across HTML pages, PDFs, Word documents, Excel workbooks, PowerPoints, and other formats—each with different structures, layouts, and terminology.

Architecture

Our approach used Snowflake end-to-end:

- Raw content was stored in an S3 bucket and exposed as an External Stage.

- We used PARSE_DOCUMENT to extract and normalize text from all file types.

One major advantage: PARSE_DOCUMENT outputs Markdown-formatted text, which preserves structure such as headings, bullet points, and subchapters. - After ingestion, all documents were chunked and embedded to create the knowledge base.

- Cortex Search retrieved the most relevant chunks.

- A Cortex Agent was configured with Cortex Search as a tool, handling reasoning and answer generation.

- The chatbot frontend was delivered through Snowflake Intelligence, providing a clean, business-ready interface that shows citations and reasoning steps—helping users build trust in the answers.

The result was a fully Snowflake-native RAG pipeline with no additional infrastructure, no external hosting, and no new data pipelines.

Testing Snowflake Intelligence

We tested multiple model configurations for:

- agent orchestration quality

- retrieval strength

- reasoning consistency

- response speed

Evaluation was done using LLM-as-a-Judge (Claude 3.5), scoring for factual correctness, relevance, and citation quality.

What we learned (so you don’t have to)

We tried a lot of tricks. Here are the ones that clearly moved the needle:

✅ 1. Adding brand and region metadata to each chunk was a game-changer

The hospitality sector is full of nuance: different regions, different legal rules, different operational policies.

To help the model interpret this correctly, we prepended plain-text metadata to each chunk:

Region: All Regions

Brand: Luxury Suites

Section: CHECK-OUT POLICY

Generally, guest check-out is at 12:00 PM local time. Late check-out requests are subject to availability and may incur additional charges. Properties are required to communicate fees and availability at the front desk and provide written confirmation when required by local regulations. Guests with elite membership tiers may be eligible for complimentary extensions based on occupancy and region-specific guidelines.

This simple technique dramatically improved precision:

- If a question referenced a specific brand or region, the agent filtered correctly.

- If the user did not specify, the answer stated whether a rule was global or only applied to certain markets.

Even without structured metadata fields, this lightweight approach produced measurable accuracy gains.

✅ 2. Claude 3.5 outperformed GPT-5 for agent orchestration

On average:

- Claude 3.5 produced ~10% more accurate responses,

- but its responses were slower by ~4 seconds on median queries.

✅ 3. Chunk size mattered less as expected

We experimented with multiple chunking strategies:

- PDFs / Word documents: 2,000–3,000 characters (200–300 overlap)

- HTML: 1,500–3,000 characters (150–300 overlap)

- PowerPoints: 800-1000 characters with ~100–150 character overlap

Keeping chunks on the smaller end slightly reduced accuracy (≈3–5%).

Final choice: 3,000 characters for PDFs and HTML delivered the best balance of precision and retrieval performance.

Conclusion

Employees now get instant, source-cited answers to operational questions using data the company already stores in Snowflake.

Snowflake Cortex with Snowflake Intelligence made it possible to build an end-to-end solution, go from raw, messy documents to an intelligent, compliant employee assistant—no additional infrastructure, no new data pipelines, and no external model hosting.

Senior Data Scientist